When I talk with clients about storage performance metrics, they typically focus on either bandwidth or “IOPs”. But really there are three dimensions of storage performance! Let’s consider these three storage performance metrics and how we can design systems to work around shortcomings.

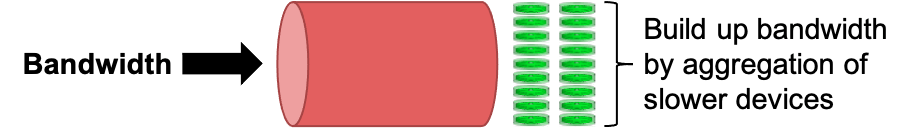

First, there is bandwidth (or throughput). This is a measure of how many bytes can be moved in one second, and these days it is typically measured in GB/s. How well a particular storage system performs depends on the actual workload – workloads consisting of large I/O transfers typically achieve higher bandwidth than workloads consisting of small I/O transfers. With a parallel file system, we can always aggregate more devices to make bandwidth arbitrarily large – presuming we also have enough application threads to drive that bandwidth! (Calculating the bandwidth with traditional RAID is described in Modeling disk performance (traditional RAID).)
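To make the "enough application threads" caveat concrete, here is a rough back-of-the-envelope sketch. It assumes a deliberately simple model in which bandwidth scales linearly with both device count and thread count, and the deliverable bandwidth is whichever side runs out first. The function name and the per-device and per-thread figures are hypothetical, chosen only for illustration.

```python
def achievable_bandwidth_gbs(num_devices, per_device_gbs,
                             num_threads, per_thread_gbs):
    """Estimate deliverable bandwidth (GB/s) under a simple linear model.

    Assumption: devices and threads both scale linearly, so the result
    is the smaller of what the storage can supply and what the
    application threads can drive.
    """
    storage_limit = num_devices * per_device_gbs   # what the storage can supply
    client_limit = num_threads * per_thread_gbs    # what the threads can drive
    return min(storage_limit, client_limit)


if __name__ == "__main__":
    # Hypothetical figures: 10 devices at 2 GB/s each, threads at 1.5 GB/s each.
    for threads in (4, 32):
        bw = achievable_bandwidth_gbs(10, 2.0, threads, 1.5)
        print(f"{threads:>2} threads -> {bw:.1f} GB/s")
```

With only 4 threads the workload, not the storage, is the limit in this model; adding more devices would not help until more threads are driving I/O.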

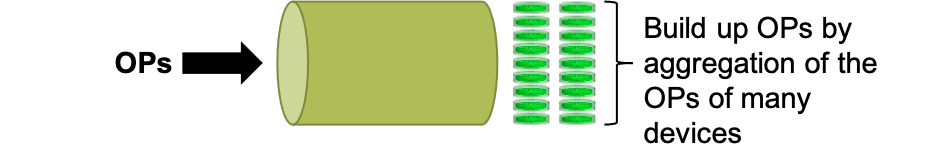

Second, there is operations per second (or OPs). Storage vendors often like to quote “IOPs”, but this can be very misleading – usually it is measured as 4k I/O transfers per second. Very few real workloads use such small I/O transfers! Application needs are also often expressed as “IOPs”. Here the term has even less clarity – it certainly isn’t 4k I/O transfers per second, but what exactly does it mean? A more meaningful number is how many POSIX operations (of some flavor and size) can be done in a second – in particular, how many files can be created in one second? Yet this is not frequently available. Generally we associate a higher OPs value with “faster” storage – yet just as with bandwidth, we can aggregate multiple storage devices to achieve higher potential OPs values. And just as with bandwidth, there need to be enough threads driving the storage workload to actually benefit from the aggregate OPs value. Too often I see attempts to aggregate disks to achieve higher OPs values without the parallel workload needed to take advantage of this aggregation.
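Two quick calculations can help put an OPs figure in context. The sketch below is illustrative only: the first function converts a quoted IOPs number at a given transfer size into bandwidth, and the second uses Little's law (throughput = concurrency / latency), which is a modeling choice introduced here rather than something any particular vendor publishes, to estimate how many operations a given number of synchronous threads can actually drive. The function names and example numbers are hypothetical.

```python
def iops_to_bandwidth_gbs(iops, transfer_bytes):
    """Bandwidth (GB/s, decimal) implied by an IOPs figure at a transfer size."""
    return iops * transfer_bytes / 1e9


def achievable_ops(aggregate_device_ops, num_threads, latency_s):
    """Estimate achieved operations per second.

    Assumption: each thread issues one synchronous operation at a time,
    so by Little's law the threads can offer at most
    num_threads / latency_s operations per second.
    """
    offered_ops = num_threads / latency_s
    return min(aggregate_device_ops, offered_ops)


if __name__ == "__main__":
    # A hypothetical "100,000 IOPs" quote at 4 KiB per transfer.
    print(f"{iops_to_bandwidth_gbs(100_000, 4096):.2f} GB/s")

    # Hypothetical: devices aggregated to 1,000,000 OPs, but only
    # 8 threads, each waiting 1 ms per operation.
    print(f"{achievable_ops(1_000_000, 8, 0.001):,.0f} ops/s")
```

In this model a large IOPs number at 4k corresponds to well under 1 GB/s, and a handful of synchronous threads comes nowhere near the aggregate OPs of the devices behind them.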

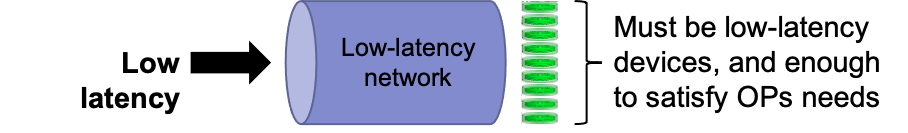

The final consideration is storage latency. This is how long a single I/O operation takes to complete. The underlying storage device, the network, network and storage adapters, and even the software all play a role in determining the latency. Workloads that involve human interactions, or that require short transactions, require low-latency storage. Unlike bandwidth and OPs, latency cannot be reduced through aggregation. If latency is too great, it will be necessary to use faster underlying storage, consider a lower-latency network, or improve the software. Introducing a cache can help with latency – if large enough, a fast cache will improve write performance. Read performance can also be improved with a cache, but you need a strategy for dealing with cache misses.
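To see why cache misses matter so much for reads, here is a minimal sketch of the usual weighted-average estimate of read latency. It assumes a hit costs only the cache latency and a miss costs only the backing-storage latency, ignoring lookup overhead, queueing, and tail effects. The function name and the latency figures are hypothetical.

```python
def effective_read_latency_ms(hit_ratio, cache_ms, backing_ms):
    """Average read latency (ms) for a cache in front of slower storage.

    Assumption: a hit costs cache_ms and a miss costs backing_ms;
    lookup overhead and queueing are ignored.
    """
    if not 0.0 <= hit_ratio <= 1.0:
        raise ValueError("hit_ratio must be between 0 and 1")
    return hit_ratio * cache_ms + (1.0 - hit_ratio) * backing_ms


if __name__ == "__main__":
    # Hypothetical: 0.1 ms cache in front of 10 ms bulk storage.
    for hit_ratio in (0.50, 0.90, 0.99):
        avg = effective_read_latency_ms(hit_ratio, 0.1, 10.0)
        print(f"hit ratio {hit_ratio:.0%}: about {avg:.2f} ms average")
```

Even at a 90% hit ratio, the misses dominate the average in this example. The average also hides the fact that each individual miss still pays the full backing-storage latency, which is what an interactive user notices.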

Understanding these storage metrics, and what your workload requires, is important to developing a satisfactory design. Parallel file systems can use aggregation to deal with deficiencies in bandwidth and OPs, but only if there are sufficient threads to leverage the aggregation. A low-latency cache can allow transactional and interactive workloads to use high-latency bulk storage, but only if the cache is large enough for the working set, and if cache misses will not be a problem.